Because it's what I do, I've been looking at some midcore F2P mobile games using my Popularity, Success and Monetisation Efficiency ratios.

It's been an interesting process - one that reveals the strengths and weaknesses of the system.

Scores on the doors

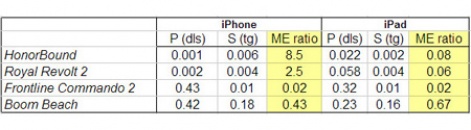

If we consider the P for Popularity metric (the number of countries in which a game goes top 10, divided by the number of top 100s it reaches on the free download chart, all indexed to the game's position on the US chart), we can see strong variation.
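To make the core of that ratio concrete, here is a minimal sketch in Python. It covers only the top 10 divided by top 100 step; the indexing to the US chart position is left out because its exact form isn't spelled out here. The function name `chart_ratio` and the country counts are hypothetical, chosen purely for illustration.

```python
def chart_ratio(top10_countries: int, top100_countries: int) -> float:
    """Share of a game's top-100 country placings that also reached the top 10.

    Assumption: this is only the unindexed part of the metric; the US-chart
    indexing step is not modelled.
    """
    if top10_countries < 0 or top100_countries < 0:
        raise ValueError("country counts cannot be negative")
    if top10_countries > top100_countries:
        raise ValueError("every top-10 placing is also a top-100 placing")
    if top100_countries == 0:
        return 0.0  # the game never charted, so treat the ratio as zero
    return top10_countries / top100_countries


# Hypothetical counts, not taken from the chart data behind this article.
print(chart_ratio(42, 100))  # 0.42
print(chart_ratio(1, 50))    # 0.02
```

A score near 1.0 would mean that almost everywhere the game charted, it charted near the top.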

Games from big developers like Glu Mobile (Frontline Commando 2: check out its Monetizer) and Supercell (Boom Beach) now find it relatively easy to generate a lot of downloads.

They score 0.42 and 0.43 out of a possible total of 1.0, because those companies have strong user acquisition expertise and the money to back it up.

Interestingly, note that both games score higher on iPhone than iPad, despite all these games being more iPad friendly in terms of user experience. This is likely because there's more advertising volume and general activity on iPhone than iPad.

If we compare the performance of two high quality games from small developers, however, we can see there's a considerable difference - two orders of magnitude.

In this case Flaregames (Royal Revolt 2: check out its Monetizer) and JuiceBox (HonorBound) are clearly talented at developing games but lack expertise, cash and connections in terms of generating a lot of downloads.

However, both games do better on iPad compared to iPhone (0.022 vs 0.001 and 0.058 vs 0.002) because of the better virality their games receive from the more considered iPad audience.

Moving on to our S for Success metric, which is similar to Popularity but looks at top grossing chart performance, all four games score much lower. But this is what we'd expect, as the download charts are highly volatile while the top grossing charts are now largely static.

Only Boom Beach has performed very well, although we'd count Frontline Commando 2 as being a borderline commercial success (with a score of 0.01: we count 0.01 and above as a success).

The two 'indie' games perform very badly commercially in comparison.

Cash in the hand

The final metric is the most interesting.

The Monetisation Efficiency ratio is simply calculated as Success/Popularity. For this reason, however, it's highly sensitive to a very high or very low value in either of the ratio's two terms.

For example, Boom Beach scores very highly in terms of Popularity so its ME is less than one, purely because Supercell has been able to generate a lot of downloads.

In contrast, we can see that while Frontline Commando 2 generates a similar 'amount' of downloads (this is a relative not absolute measure), its top grossing performance was weak, so its ME is an order of magnitude lower than Boom Beach's.

On that basis, Boom Beach is the more successful game, but in this case that's determined by the Success number, not the overall ME number.

The two indie games score very highly, however.

This is because, relative to their downloads, their top grossing chart position, while weak, is proportionally much better, albeit only on iPhone. On iPad, where they score higher on downloads, their top grossing performance was equal or worse.
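The pattern in these examples can be sketched in a few lines of Python, assuming ME is Success divided by Popularity (the reading that fits the cases above, where lots of downloads pull the ratio down). The function names are hypothetical, and the indie figures are invented for illustration; only the 0.42 Popularity score, the 0.01 Success score and the 0.01 success threshold come from the text.

```python
SUCCESS_THRESHOLD = 0.01  # a Success score of 0.01 and above counts as a success


def monetisation_efficiency(success: float, popularity: float) -> float:
    """ME as Success / Popularity (an assumption consistent with the examples)."""
    if popularity <= 0:
        raise ValueError("popularity must be positive to form the ratio")
    return success / popularity


def is_commercial_success(success: float) -> bool:
    """Apply the 0.01 threshold to a Success score."""
    return success >= SUCCESS_THRESHOLD


# Big-publisher shape: high Popularity, borderline Success.
big = monetisation_efficiency(success=0.01, popularity=0.42)

# Indie shape (hypothetical figures): tiny Popularity, tiny Success.
indie = monetisation_efficiency(success=0.002, popularity=0.001)

print(f"big publisher ME: {big:.3f}, success: {is_commercial_success(0.01)}")
print(f"indie ME: {indie:.3f}, success: {is_commercial_success(0.002)}")
```

The indie game ends up with the far higher ME despite failing the success threshold, which is why the ME figure needs to be read alongside the Success score rather than on its own.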

So, is this just another case of lies, damned lies and statistics?

I'd humbly suggest no. By choosing simple ratios, we can see why these numbers are different and gain an understanding of the different influences.

And we've learned we can't directly compare games using these numbers. To that extent, we're learning the limitations of the system, but we're also gaining insights into the relative performance of games - which is a very valuable thing.