AI-based tools developer Spirit AI has revealed a slew of updates to its social behaviour tool Ally.

The tool is designed to keep online forums amicable, and does so by considering context, nuance and the relationships between users rather than simply searching for and blocking keywords.

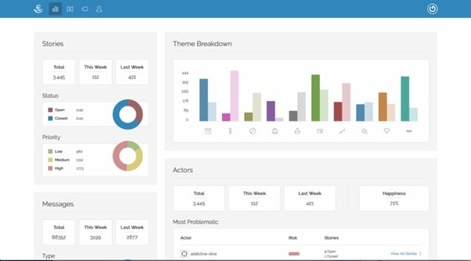

It then conveys the information to customer service and data science teams so they can understand the general tenor of the online community and predict problems before they escalate.

The host of new features includes smart filters, an updated and customizable web-based front end and an intuitive node-based interface.

New features also include bot detection, which examines every message for language patterns that reveal the culprits.

Blockin’ out the haters

“Online harassment has increasingly become an issue, both in-game and in other online forums, but it’s a challenging problem to solve,” said Spirit AI creative partnerships director Mitu Khandaker.

“Not all communities have the same culture, rules, and norms, so one-size-fits-all solutions aren’t the best answer.

“It’s important to be able to consider the context; to be able to discern harassment from smack talk, in order to foster safe, healthy communities. Ally looks beyond language to context and behaviour to provide intelligence to help head off trouble.”
